How Bids Lose Credibility Before They Are Even Read

What missing structure, vague claims, and weak evidence signal to buyers.

Most bids do not lose on the quality of their writing. They lose on what the evaluator sees, or fails to see, before the writing is assessed at all.

Government evaluation frameworks across Australia, New Zealand, and the United Kingdom follow a similar sequence: compliance first, then structure, then evidence, then substance. By the time an evaluator actually reads your methodology or value proposition, they have already formed a working judgment about whether your organisation can deliver. That judgment is shaped almost entirely by structural signals you may never have considered, and it is very difficult to reverse once set.

APMP industry benchmarks consistently place the average tender win rate between 39% and 45%. The gap between high performers and everyone else has surprisingly little to do with talent or sector expertise. It comes down to process, structure, and the discipline to treat response quality as an engineering problem rather than a writing exercise.

Compliance Is The First Gate, And It Is Binary

In Australian Commonwealth procurement, the rules leave no room for interpretation. If a tender response does not clearly demonstrate how it meets mandatory criteria, the buyer must set it aside. There is no discretion, no second chance, and no partial credit for intent. A non-compliant bid is excluded before evaluation begins.

The same principle holds across New Zealand and UK public sector procurement. The New Zealand Government’s evaluation methodology guidance publishes the standard 0 to 5 scoring scale used across government procurement, and its definitions are precise. A score of zero means the submission does not meet the requirement, does not comply, or provides insufficient information to demonstrate capability. A score of three, which merely qualifies as “acceptable,” already requires demonstrated ability with supporting evidence.

What this means in practice is that the first thing an evaluator does is check whether you followed the instructions. Did you return all mandatory documents, complete every section, address each criterion in the order requested, and meet the formatting requirements? These are structural questions with nothing to do with how well you write, and they determine whether your bid reaches the scoring table at all.
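The gate described above can be treated as a pre-submission check. The following is a minimal sketch only, not any buyer's actual process: the `Submission` type, the `compliance_gaps` and `passes_gate` functions, and the document and criterion names are all hypothetical, and formatting requirements are left out because they vary by tender.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Submission:
    """Hypothetical view of a bid, reduced to what the compliance gate checks."""
    documents: set[str]        # names of documents included in the response
    sections: list[str]        # criterion IDs in the order the bid addresses them


def compliance_gaps(
    submission: Submission,
    required_documents: set[str],
    criteria_order: list[str],
) -> list[str]:
    """Return every structural gap found; an empty list means the gate is passed."""
    gaps: list[str] = []

    # Every mandatory document must be returned.
    for doc in sorted(required_documents - submission.documents):
        gaps.append(f"missing document: {doc}")

    # Every criterion must be addressed somewhere in the response.
    for criterion in criteria_order:
        if criterion not in submission.sections:
            gaps.append(f"criterion not addressed: {criterion}")

    # Criteria that are addressed must appear in the order the buyer requested.
    addressed = [c for c in submission.sections if c in criteria_order]
    expected = [c for c in criteria_order if c in submission.sections]
    if addressed != expected:
        gaps.append("criteria addressed out of requested order")

    return gaps


def passes_gate(gaps: list[str]) -> bool:
    # Binary by design: a single gap fails the whole submission.
    return not gaps


# Illustrative values only (assumed, not taken from any real tender).
required = {"pricing schedule", "declaration"}
criteria = ["C1", "C2", "C3"]

complete_bid = Submission(documents={"pricing schedule", "declaration"},
                          sections=["C1", "C2", "C3"])
incomplete_bid = Submission(documents={"declaration"}, sections=["C2", "C1"])
```

The function returns a list of gaps rather than a score on purpose. A team running this kind of check before submission wants to know what to fix, while the gate itself stays pass or fail.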

Evaluators Score Bids. They Do Not Read Them.

Lohfeld Consulting, a US government proposal consultancy that has supported over 550 proposals worth more than $170 billion in contract value, describes evaluator behaviour in terms that should unsettle any team relying on narrative quality alone. Source Selection Board evaluators do not read proposals sequentially. They score them against a criteria matrix, searching for specific keywords and evidence aligned to evaluation factors. In many cases, the evaluation panel is divided into teams, each responsible for a different section, and some evaluators never see the full document.

The consequences for response architecture are significant. If your bid reads like a continuous narrative, the evaluator assigned to Section 3 has no context from Section 1. If your evidence sits buried in prose rather than surfaced through clear structure, the evaluator scanning for proof of delivery capability may never find it. Shipley Associates, the world’s largest bid consultancy, frames this as a foundational principle: people are lookers first, then readers. Their research shows evaluators remember roughly twice as much from a graphic as from text alone, and when they both see a graphic and read the accompanying explanation, recall increases sixfold.

The implication is uncomfortable but clear: a well-structured bid with visible compliance mapping, explicit headings, and evidence positioned exactly where the evaluator expects it will outscore a better-written bid that forces the reader to hunt for the answers.

Vague Claims Produce Zero Scores

Every government scoring framework defines high scores in terms of evidence, and low scores in terms of its absence. The UK Cabinet Office’s Bid Evaluation Guidance Note defines a score of zero as providing no evidence of expertise or experience, and a score of one as evidence that is weak or incomplete. South Australia’s tender evaluation guidelines require high-scoring responses to contain compelling evidence that can be readily verified by the panel, with no omissions and no major weaknesses. Low scores are described as “manifestly inadequate.”

We have written previously about how evaluators choose the least risky option, and the mechanism here is the same. Vague language does not just fail to persuade. It actively increases perceived delivery risk, because an evaluator reading unsupported claims will reasonably ask: if this organisation had the evidence, why did they not present it?

First Impressions Compound Through The Entire Evaluation

Evaluators are human, and humans form impressions early. An evaluator who opens a bid and immediately sees a compliant structure, clear headings, and evidence placed exactly where expected will approach the rest of the document with a degree of confidence in the supplier behind it. An evaluator who opens a bid and finds missing sections, generic language, or a structure that does not match the evaluation criteria will carry that doubt into every page that follows, even pages where the content itself may be strong.

This is how credibility compounds. A well-structured opening earns the evaluator’s trust, and trust creates a reading posture where subsequent claims are more likely to be received favourably. A poorly structured opening does the opposite: it puts the evaluator on alert, scanning for further confirmation that this supplier is not across the detail.

Once that lens is set, strong content in Section 5 cannot undo weak structure in Section 1. The evaluator is not starting fresh with each criterion. They are building a cumulative picture of whether this organisation can deliver, and every signal in the document either reinforces or undermines that picture. That is why compliance architecture, evidence presentation, and response structure are the scoring infrastructure of the bid itself.

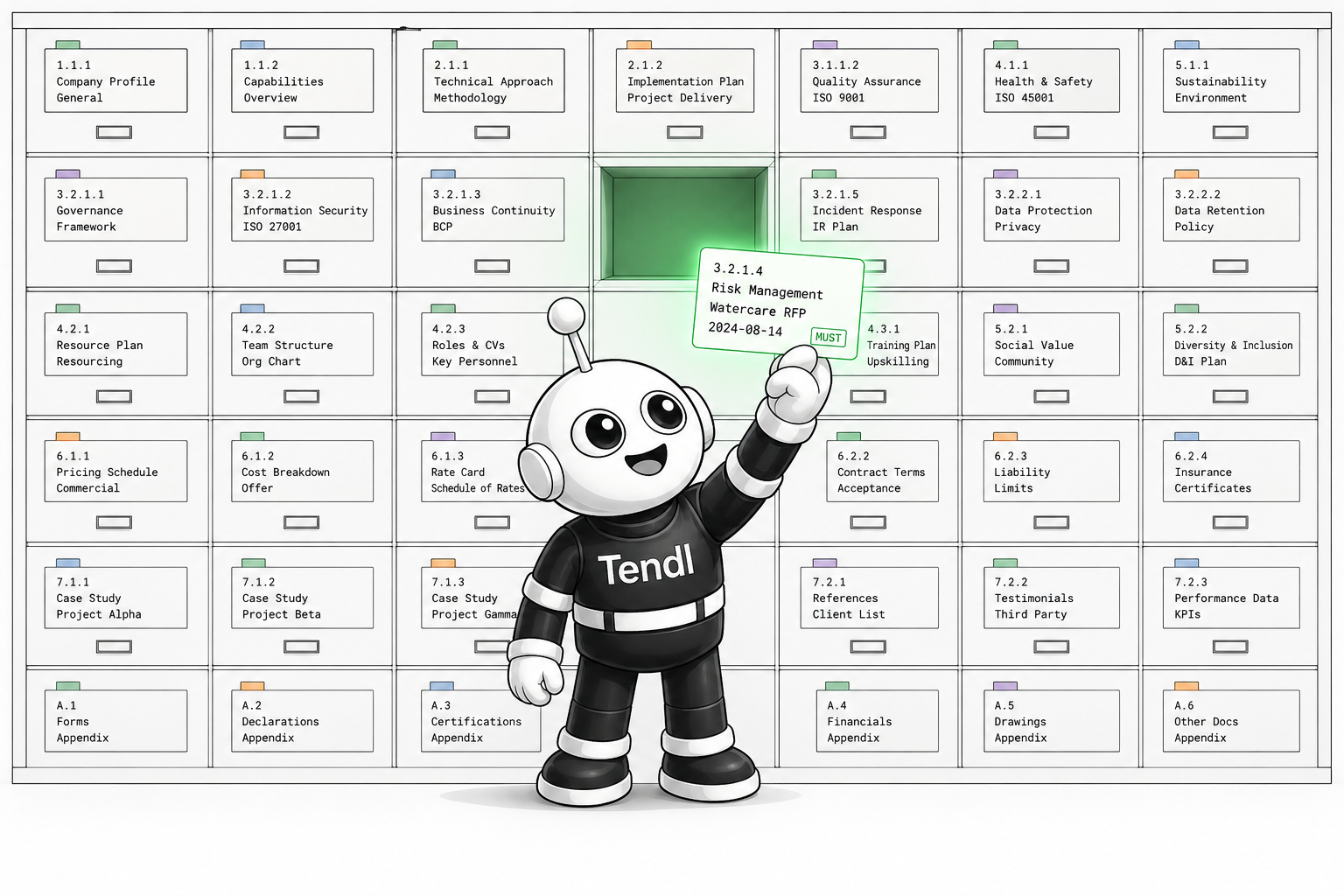

What This Means For Bid Teams

Most organisations are investing their bid effort in the wrong place. Teams spend the overwhelming majority of their time on drafting, often under deadline pressure, and often either starting from scratch or reworking content from a previous submission that was never properly governed. The work that actually determines scores, including compliance mapping, evidence retrieval, response architecture, and structured proof points, is either rushed at the start or patched together at the end.

Organisations that reverse this emphasis consistently outperform. The pattern holds across every jurisdiction and sector: teams that invest in structured qualification, formal review gates, and governed evidence libraries win more work than teams that pour the same hours into drafting under pressure. The system that produces the prose matters more than the prose itself.

The bid document is the first deliverable of a new contract, and evaluators treat it that way. Structure, compliance, and evidence are signals of operational maturity, governance capability, and delivery credibility. If your bid loses credibility before it is read, the writing never gets a chance to win it back.